News flash! There’s this thing called artificial intelligence (AI), and it can help you do stuff!! As an educator, I have been intrigued since ChatGPT emerged in November 2022 by the possibilities of integrating AI into my teaching practice and getting students hands-on with these amazing tools.

Let’s be clear about what I mean by AI. Although it has a very broad definition (for example, a spell checker is a version of AI), in this post I am focusing on generative AI. Generative AI tools can create new text, images, code, and other outputs based on the data they are trained on. When a user prompts the tool, it draws on patterns learned from that data to produce a response. These responses are original, but they are shaped by the information the AI processed during training.

One of the best resources I have seen recently is the North Carolina Department of Public Instruction (NCDPI) guidebook for the use of AI in schools. It gives teachers a comprehensive overview of the potential benefits and challenges of using AI in schools, as well as practical guidance on how to implement AI technologies in a responsible and ethical manner. The guidebook covers a wide range of topics, such as the types of AI technologies available, the legal and ethical considerations of AI use in schools, and strategies for enhancing student learning outcomes with AI.

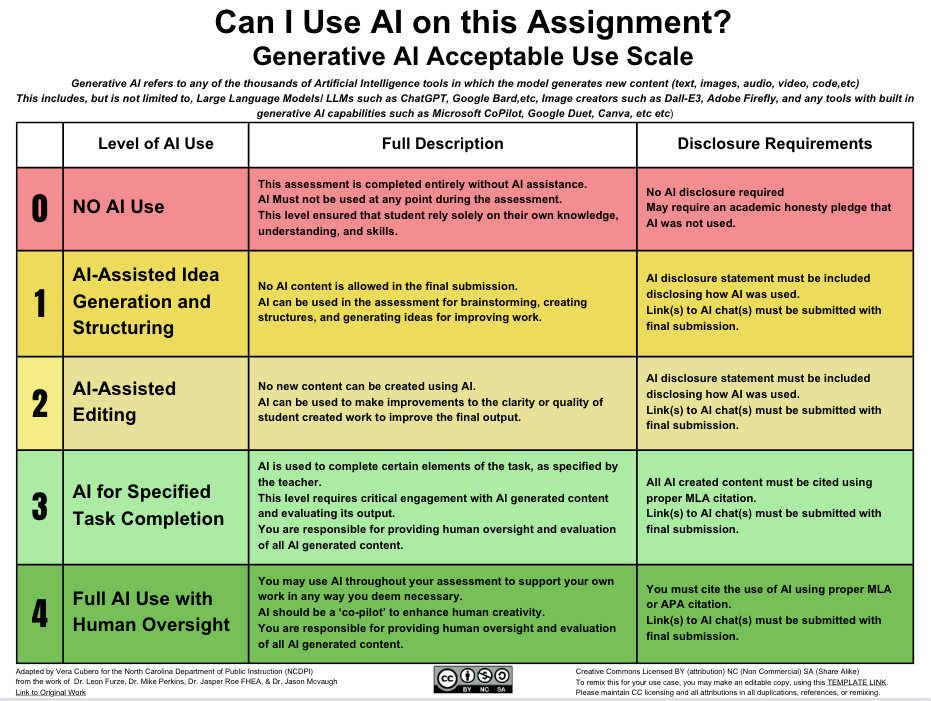

A good way to think about generative AI tools in education is as a complement that enhances learning without replacing human educators. Think of AI as being like an electric bike, which amplifies human effort, rather than a robot vacuum, which operates entirely on its own. Taking this metaphor further, this image explains appropriate use.

The guidebook goes on to give advice on selecting AI tools. When choosing AI tools for schools, factors such as capabilities and limitations, bias mitigation, student privacy, human oversight, and accessibility should be considered. We should deliberately teach students about these aspects of using GenAI tools, so we can avoid spending unnecessary energy trying to catch students using them inappropriately.

Post-plagiarism: writing in the age of AI

The main concern about student use of AI seems to revolve around authenticity. Authenticity, as defined by NZQA, is “the assurance that evidence of achievement produced by a learner is their own.” Dr. Sarah Elaine Eaton argues that AI will fundamentally change how we view writing, authenticity, and plagiarism. In her concept of a “post-plagiarism world,” humans and AI will collaborate on writing, leading to a rise in hybrid writing styles. However, humans will still be responsible for the content created, including fact-checking and the ethical use of AI tools. While traditional plagiarism definitions may not directly apply to AI-generated text, because AI can generate unique text that is not copied from another source, the core idea of attribution and respect for sources will remain important. Overall, AI will have a significant impact on writing, but it is important to remember that AI is a tool that can be used to enhance human creativity, not replace it.

A possible solution is to give learners clear guidelines on appropriate use in assigned tasks. If we can clearly explain to learners how and when to use these tools, they will be better at making deliberate choices for themselves. Here is the suggested scale:

Using AI Tools with Students: Navigating Age Restrictions

The number of tools targeted at educators is overwhelming. Check out the AI Educator Tool repository. One challenge educators encounter when using AI tools with students is navigating age restrictions. While AI technology offers immense benefits in terms of efficiency and effectiveness, tools often carry age restrictions because of privacy concerns and data protection regulations. As educators, we need to be mindful of these restrictions.

Because I have to be selective, here is a summary of the different tools I have used with learners:

One important consideration is accessibility. In particular, can the tool be used in class in a way that complies with its terms of service? The main term to check is the age restriction. As educators, we need to navigate these age restrictions responsibly and find ways to incorporate AI tools into our teaching practices that meet the terms of use and are appropriate for learners.

I initially used the Codebreaker.edu tool, as students were not required to sign in with an account. Despite being limited to 2,000-character inputs, the tool is valuable for generating basic prompts and text-based outputs. However, the Terms of Service state that it uses a custom interface of OpenAI’s GPT-3, which implies that users must be 13 or older with parental permission.

Recently, Magic School.ai released a student tool called Magic Student. This tool doesn’t seem to have a strict 13+ age requirement, provided your school has notified parents/guardians. The terms of use state that the tool protects the privacy of younger children and is compliant with regulations such as the Children’s Online Privacy Protection Act (COPPA) in the United States. The interface appears to be a walled garden, with a teacher being able to see how students are using the prompts.

Intrigued by this, I dove into the online training offered by Magic School.ai and became a Magic School AI Pioneer. This experience not only enhanced my understanding of AI’s capabilities but also introduced me to a valuable tool, which appears to be suitable for students under 13 years old, addressing their specific needs.

Conclusion

When integrating AI into education, a thoughtful and responsible approach is necessary, taking into account the benefits and challenges of AI implementation. By navigating age restrictions ethically and implementing AI tools strategically, educators can harness the power of AI to create personalised and engaging learning experiences that empower students to succeed in the digital age. It is crucial for educators to pay attention to the terms of use for the AI tools they are using with learners, and to deliberately teach those learners appropriate use.

Acknowledgements: this post was written with the help of AI Tools (Google Gemini, Magicschools.ai, Quillbot)